RAG Pipelines in Production: What Nobody Warns You About

Retrieval-augmented generation works in demos. In production, you face chunking strategies, embedding drift, latency budgets, and retrieval quality that degrades over time. Here is what the tutorials skip.

RAG demos are deceptively easy. You chunk a PDF, embed the chunks, store them in a vector database, and retrieve the top-k results at query time. It works on your laptop, impresses in a presentation, and then falls apart in production in ways the demo never hinted at.

The Chunking Problem

Fixed-size chunking — splitting every document into 512-token windows — is the default and usually the wrong choice. A chunk boundary that splits a definition from its explanation destroys retrieval quality. The retrieved chunk is syntactically complete but semantically broken. Semantic chunking, which splits at natural boundaries like paragraph breaks or section headers, produces dramatically better retrieval at the cost of more complex ingestion pipelines.

Embedding Drift

Embedding models change. When you upgrade your embedding model, the vector representations of existing documents are no longer compatible with new query embeddings. You must re-embed your entire corpus. This is not a one-time operation — it is a migration that must be planned, versioned, and executed without downtime. Systems that treat the embedding model as a fixed dependency get burned by this eventually.

Plan for re-embedding from day one. Version your embeddings. Track which model version produced which vectors. This is schema migration for AI systems.

Retrieval Quality Degrades Over Time

As your document corpus grows and ages, retrieval quality silently degrades. Stale documents rank alongside fresh ones. The top-k results become noisier. Without an evaluation pipeline that continuously measures retrieval precision and recall against a ground-truth test set, you will not know this is happening until users complain about answer quality.

Latency Budgets and the Retrieval Tax

Every RAG query adds a retrieval step before the LLM call. That retrieval step — embedding the query, running an approximate nearest-neighbour search, fetching and reranking results — costs time. For synchronous user-facing endpoints, this latency tax is real. Reranking with a cross-encoder adds accuracy but doubles the latency. These are engineering trade-offs that must be measured, not assumed.

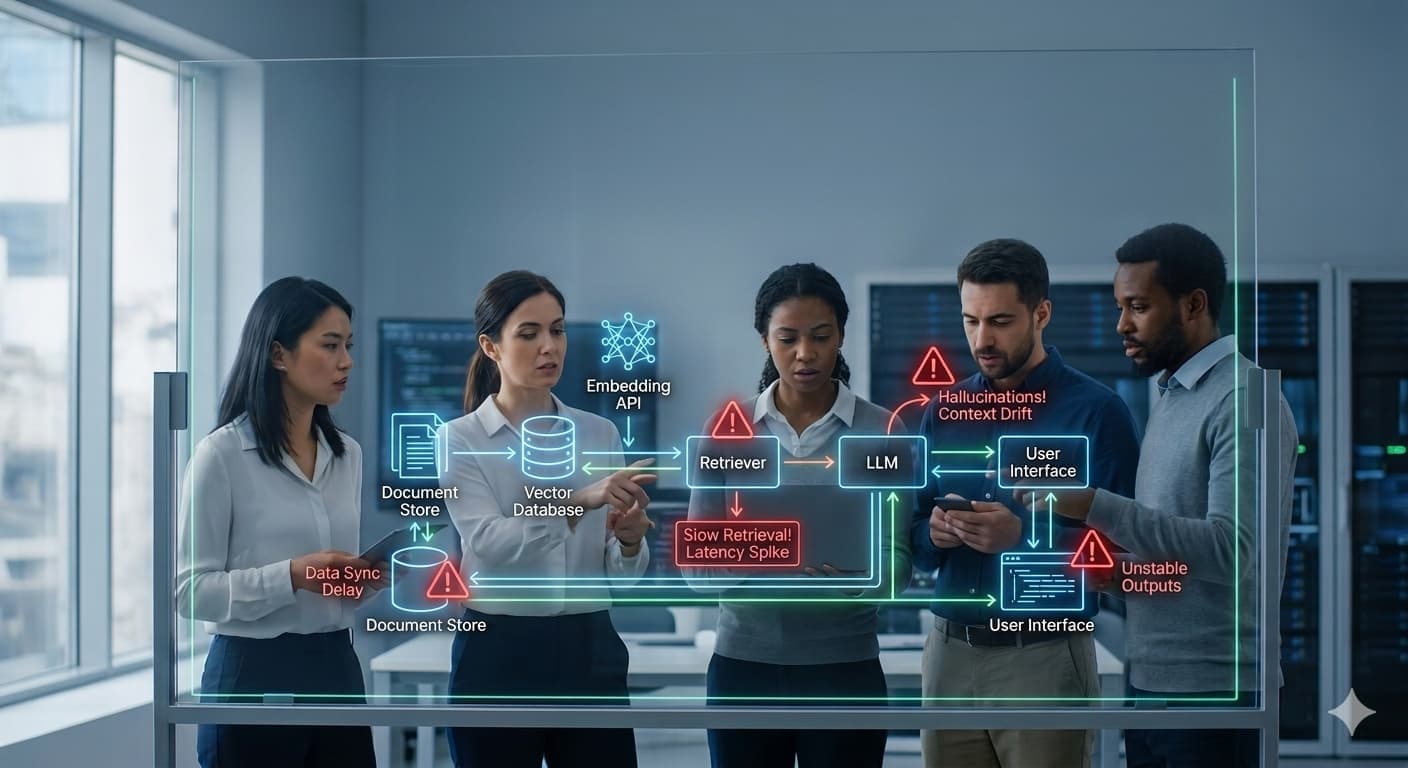

What a Production RAG Pipeline Actually Looks Like

A production RAG system is not a script — it is a pipeline with ingestion, versioning, evaluation, and monitoring stages. Ingestion handles document parsing, chunking, embedding, and upsert with idempotency. Versioning tracks embedding model versions and corpus snapshots. Evaluation runs offline and online metrics against known queries. Monitoring tracks retrieval latency, cache hit rates, and answer quality signals. Build all four stages before you go to production, not after.